The business landscape has always been unpredictable, but today’s disruptions arrive faster and hit harder. Pandemics, supply chain shocks, AI breakthroughs, and geopolitical shifts now unfold all at once. Market dynamics that once evolved over years can transform in a single quarter.

Technologies reach mass adoption overnight, and by the time traditional data strategies deliver insights, the world has already moved on. Most of these strategies follow a familiar but outdated playbook (assess, design, implement, operate) that assumes a stability that no longer exists. The result is rigid architectures, restrictive governance, and bottlenecks that turn infrastructure into a liability.

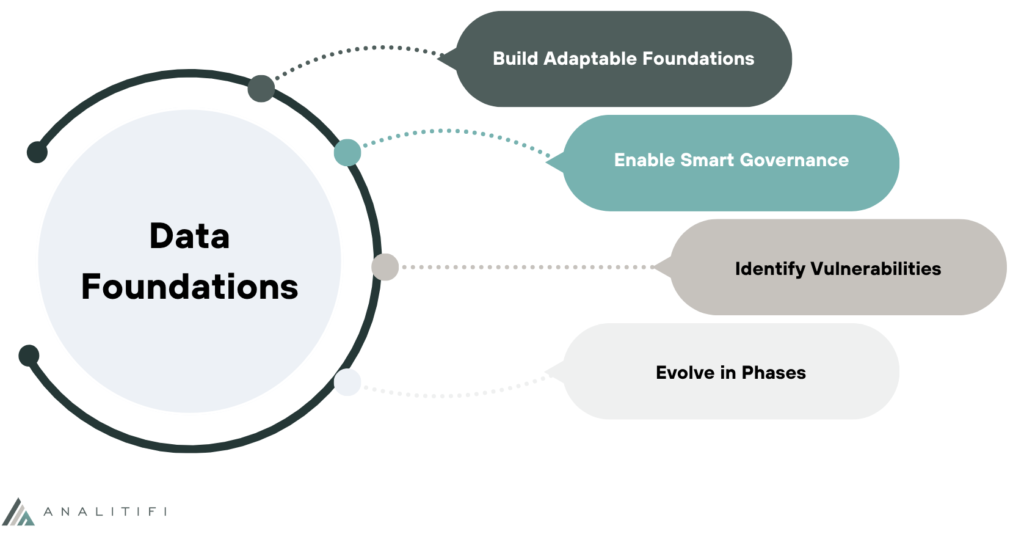

A resilient data strategy takes a different path. Instead of predicting every future, it builds the capacity to adapt to any. It favors flexibility over perfection, distributes capabilities across the organization, preserves optionality, and continuously challenges assumptions.

This post shares a practical framework for building that resilience, with steps to identify vulnerabilities, design adaptive architectures, and turn uncertainty into competitive advantage.

Understanding the Landscape of Uncertainty

Today’s organizations face uncertainty from every direction, and these forces don’t arrive one at a time; they collide and amplify each other. Market volatility driven by inflation, interest rate swings, and shifting consumer sentiment can upend revenue forecasts within a quarter. Technological disruption accelerates relentlessly: generative AI went from curiosity to boardroom priority in months, forcing companies to rethink everything from customer service to software development.

Regulatory changes cascade across industries: data privacy laws like GDPR and CCPA created compliance nightmares for global operations, while emerging AI regulations threaten to reshape how companies can even use their data. Meanwhile, global events from pandemics to geopolitical conflicts to climate disasters don’t just create one-time shocks; they fundamentally alter supply chains, workforce models, and customer expectations in ways that persist for years.

The fundamental problem is timing. Data strategies operate on 18- to 36-month planning cycles while the world changes on a weekly basis. Your carefully architected data warehouse was designed for last year’s business model. Your vendor selections were made before new technologies emerged. Your governance policies were written for regulatory requirements that have since evolved. By the time you’ve migrated your data to a new platform, the business questions you built it to answer are no longer the ones keeping executives awake at night. This mismatch isn’t a failure of planning; it’s a structural impossibility. You cannot plan fast enough to keep pace.

The casualties are real and instructive. Retail giants that built centralized data warehouses optimized for in-store analytics found themselves paralyzed when e-commerce exploded during COVID-19: their systems couldn’t handle the volume, velocity, or variety of digital customer data. Financial institutions that hard-coded regulatory rules into their data pipelines faced millions in penalties when regulations changed faster than they could redeploy code. Manufacturing companies dependent on single-source supplier data watched their entire demand forecasting collapse when geopolitical tensions disrupted those partnerships overnight.

In each case, the data strategy itself became a vulnerability. Systems designed for efficiency became brittle. Architectures optimized for known use cases couldn’t accommodate unknown ones. Organizations discovered too late that their competitive advantage had calcified into competitive liability, not because they made bad decisions, but because they made decisions that couldn’t be unmade quickly enough when the world shifted.

Core Principles of Resilient Data Strategy

Building resilience requires abandoning the pursuit of the “perfect” data architecture in favor of systems designed to evolve. Flexibility means choosing modular components over monolithic platforms, APIs over hard integrations, and cloud-native architectures that can scale up or down as needs change. It means accepting that your initial design will be wrong in ways you can’t predict, so you build for easy modification rather than permanence.

Rigid architectures, no matter how elegant, become technical debt the moment business conditions shift. Adaptable systems may feel incomplete or “good enough,” but they preserve your ability to pivot when the next disruption arrives.

Similarly, distributed decision-making breaks the centralized data team bottleneck by democratizing access and building literacy across the organization. When analysts, product managers, and operational teams can safely access and interpret data themselves, your organization can respond to opportunities in days instead of waiting weeks for ticket queues to clear. This doesn’t mean chaos; it means investing in self-service tools, clear documentation, and training that empowers people while maintaining governance guardrails.

Equally important is treating your strategy as a living document that evolves continuously rather than a static plan carved in stone. Quarterly strategy reviews, rapid experimentation cycles, and feedback loops that surface what’s working and what’s not become core operational practices. You’re not building a cathedral that will stand for centuries; you’re cultivating a garden that needs constant tending.

Redundancy and fault tolerance aren’t luxuries; they’re survival mechanisms. This means maintaining multiple paths to critical insights, diversifying data sources so you’re never completely dependent on a single vendor or system, and building automated failovers for when (not if) things break. It means regular backups, disaster recovery plans that you actually test, and architectural patterns that degrade gracefully under stress rather than collapsing entirely.
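To make the idea of automated failover across diversified data sources concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the source names (`primary_vendor`, `cached_snapshot`), the sample row, and the `fetch_with_failover` helper are hypothetical and not tied to any particular platform.

```python
from typing import Callable, Sequence

# Hypothetical source type: a zero-argument callable that returns rows or raises.
Source = Callable[[], list[dict]]


def fetch_with_failover(sources: Sequence[tuple[str, Source]]) -> tuple[str, list[dict]]:
    """Try each named source in order and return the first successful result."""
    errors: list[str] = []
    for name, fetch in sources:
        try:
            return name, fetch()
        except Exception as exc:  # broad on purpose: any failure triggers failover
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all sources failed: " + "; ".join(errors))


def primary_vendor() -> list[dict]:
    # Simulated outage of the preferred (hypothetical) vendor feed.
    raise ConnectionError("vendor API unreachable")


def cached_snapshot() -> list[dict]:
    # Older but still usable fallback data (illustrative values only).
    return [{"sku": "A-100", "on_hand": 42}]


used, rows = fetch_with_failover([
    ("primary_vendor", primary_vendor),
    ("cached_snapshot", cached_snapshot),
])
print(used, rows)
```

The point of the ordering is graceful degradation: the answer may be less fresh when the fallback is used, but the business still gets an answer, and the caller knows which source produced it.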

Finally, value-focused prioritization keeps you honest about what actually matters. It’s easy to get seduced by technical elegance (the perfectly normalized data model, the cutting-edge tool, the comprehensive data catalog), but resilience comes from ruthlessly focusing on business outcomes: Which decisions does this data enable? How quickly can we go from question to answer? What happens to the business if this system fails? Technical complexity should serve strategic value, not the other way around. When forced to choose between a sophisticated solution that takes months to implement and a simpler approach that delivers value next week, resilient strategies bias toward speed and learning.

Building and Implementing Resilience: A Practical Framework

Resilience also depends on governance that enables rather than restricts. Automated compliance and quality checks should catch issues without slowing teams down. Clear ownership models create accountability without bottlenecks, and policies must balance control with the freedom to experiment.
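As one way to picture an automated quality check that flags issues without blocking teams, consider the sketch below. The column names, the 5% null-rate threshold, and the `check_batch` helper are illustrative assumptions, not a prescribed standard or any specific tool's API.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str
    passed: bool
    detail: str


def check_batch(rows: list[dict], required: list[str],
                max_null_rate: float = 0.05) -> list[CheckResult]:
    """Run simple completeness checks on a batch and report results instead of raising."""
    results = [CheckResult("non_empty", bool(rows), f"{len(rows)} rows")]
    for column in required:
        nulls = sum(1 for row in rows if row.get(column) is None)
        # An empty batch counts as fully null so the check fails visibly.
        rate = nulls / len(rows) if rows else 1.0
        results.append(
            CheckResult(f"null_rate:{column}", rate <= max_null_rate, f"{rate:.0%} null")
        )
    return results


# Hypothetical batch with one missing amount.
batch = [
    {"order_id": 1, "amount": 19.99},
    {"order_id": 2, "amount": None},
    {"order_id": 3, "amount": 5.00},
    {"order_id": 4, "amount": 12.50},
]
results = check_batch(batch, required=["order_id", "amount"])
failures = [r.name for r in results if not r.passed]
print(failures)
```

Returning a list of results, rather than raising on the first problem, lets a pipeline decide per dataset whether a failure should alert an owner or halt a load.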

Before building anything new, assess where you’re vulnerable today. Map your dependencies to identify single points of failure: Which vendor outage would cripple operations? Which data pipeline breaking would leave executives flying blind? Which team member leaving would create knowledge gaps? Conduct an honest capability gap assessment: where does your current strategy lack the flexibility, redundancy, or speed you need? This vulnerability analysis isn’t about finding blame; it’s about understanding your starting point and prioritizing where resilience investments will have the greatest impact.
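The dependency-mapping step can start as something very small. The sketch below assumes a hand-maintained map in which each critical output lists its requirements and the interchangeable components that can satisfy each one; all system names are hypothetical, and a real inventory would likely come from a catalog or lineage tool.

```python
from collections import defaultdict

# Hypothetical dependency map: output -> requirement -> components that can satisfy it.
DEPENDENCIES = {
    "executive_dashboard": {
        "sales_data": ["warehouse"],
        "ingestion": ["vendor_a_api", "vendor_b_api"],
    },
    "demand_forecast": {
        "supplier_feed": ["supplier_x"],
        "sales_data": ["warehouse", "lake_replica"],
    },
}


def single_points_of_failure(deps: dict) -> dict:
    """Return {component: sorted outputs that break if that component fails}."""
    spofs = defaultdict(set)
    for output, requirements in deps.items():
        for providers in requirements.values():
            if len(providers) == 1:  # no alternative path for this requirement
                spofs[providers[0]].add(output)
    return {component: sorted(outputs) for component, outputs in spofs.items()}


print(single_points_of_failure(DEPENDENCIES))
```

In this toy map, `warehouse` is a single point of failure for the dashboard but not for the forecast, which has a replica to fall back on. That kind of asymmetry is what tells you where a resilience investment pays off first.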

Design resilience in phases, not through a single replatforming. Start with quick wins that show value fast: adding a backup data source, automating quality alerts, or enabling self-service for high-demand datasets. Pair these with longer-term architectural changes that deepen adaptability. Budget intentionally for flexibility. Fund experimentation, plan for tech refreshes, and maintain the capacity to pivot quickly. Measure real progress: track recovery time, onboarding speed for new data sources, decision latency, and results from regular resilience audits. These indicators reveal whether you’re truly becoming more resilient or just adding complexity.

Conclusion

Resilient data strategies aren’t about perfect predictions; they’re about adaptable systems, distributed capabilities, smart redundancy, and constant evolution. They value flexibility over perfection, enable agility through governance, and stay focused on business outcomes, not technical ideals.

Disruption is inevitable. The advantage isn’t in predicting what’s next, but in building the capacity to sense and respond faster than others. Resilient organizations adapt quickly, experiment safely, and turn uncertainty into opportunity, while rigid ones scramble to catch up.

Start small. Map one system’s dependencies and find its weak points. Add a backup data source. Decentralize one workflow. Automate one quality check. Each small step compounds your resilience. The goal is continuous evolution toward greater strength, speed, and adaptability.

In a world where volatility is the new normal, building a resilient data strategy is just the beginning. If you’re looking to strengthen your organization’s ability to adapt, innovate, and stay competitive, explore “Future-Proof Data Strategy: Key Steps for Long-Term Success” for a deeper look at long-range planning. You might also find “Unlocking Organizational Success with a Comprehensive Data Strategy” valuable for understanding how data maturity ties directly to business outcomes. And for teams focused on turning resilience into real impact, “Bridging the Gap Between Data and Business Value” offers practical guidance on ensuring your data efforts consistently move the needle.

These articles expand on the core themes of adaptability, alignment, and strategic clarity that drive durable data strategies.

Ready to build a more resilient data strategy?

Analitifi helps organizations assess vulnerabilities, design adaptive architectures, and implement practical resilience frameworks that deliver value quickly. Whether you’re starting from scratch or need to retrofit existing systems for greater agility, our team can guide you through the transformation.

Contact Analitifi today to discuss how we can help you turn uncertainty into competitive advantage.